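Everyone expected a smooth ride, right? When you hear ‘TensorFlow,’ you picture a powerful, accessible tool for deep learning. But for those of us building things on Windows, the rug got pulled. TensorFlow 2.10, released in 2022, was the last version with native GPU support on Windows. From 2.11 onward? Gone. Poof. You can still install a CPU-only build natively, but if you want your GPU doing the work, what now?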

The Great Windows Migration

Look, the tech world loves a good buzzword, but ‘native support’ isn’t one of them, and it’s a pretty fundamental thing to lose. The expectation was that you’d just install it and go. Now, if you’re on Windows and you want to play with TensorFlow on your GPU (and let’s be honest, it’s still one of the heavy hitters in the deep learning toolbox), your first stop isn’t the TensorFlow website for a Windows installer. It’s the Windows Subsystem for Linux (WSL2) documentation.

This isn’t just a minor inconvenience; it forces a significant shift in how developers approach setting up their environment. Instead of a straightforward installation, it’s a two-stage process: get your Linux environment cooking within Windows, then get TensorFlow running there. It’s not insurmountable, especially since WSL2 has made running Linux on Windows smoother than it used to be, but it definitely adds a layer of complexity that wasn’t there before.
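A quick sanity check for stage one is to ask Python where it thinks it is running. The sketch below is a heuristic, not an official API: it assumes the WSL kernel release string contains "microsoft", which is the usual convention. The function names `looks_like_wsl` and `describe_platform` are made up for illustration.

```python
import platform


def looks_like_wsl(kernel_release: str) -> bool:
    """Heuristic: WSL kernel release strings usually contain 'microsoft'."""
    return "microsoft" in kernel_release.lower()


def describe_platform() -> str:
    """Summarize where this Python interpreter is running."""
    system = platform.system()  # e.g. "Linux", "Windows", "Darwin"
    if system == "Linux" and looks_like_wsl(platform.release()):
        return "Linux under WSL"
    return system


if __name__ == "__main__":
    print(describe_platform())
```

If this prints plain `Windows`, the interpreter is the native one, not the one inside the Linux environment.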

A Real-World Test Drive

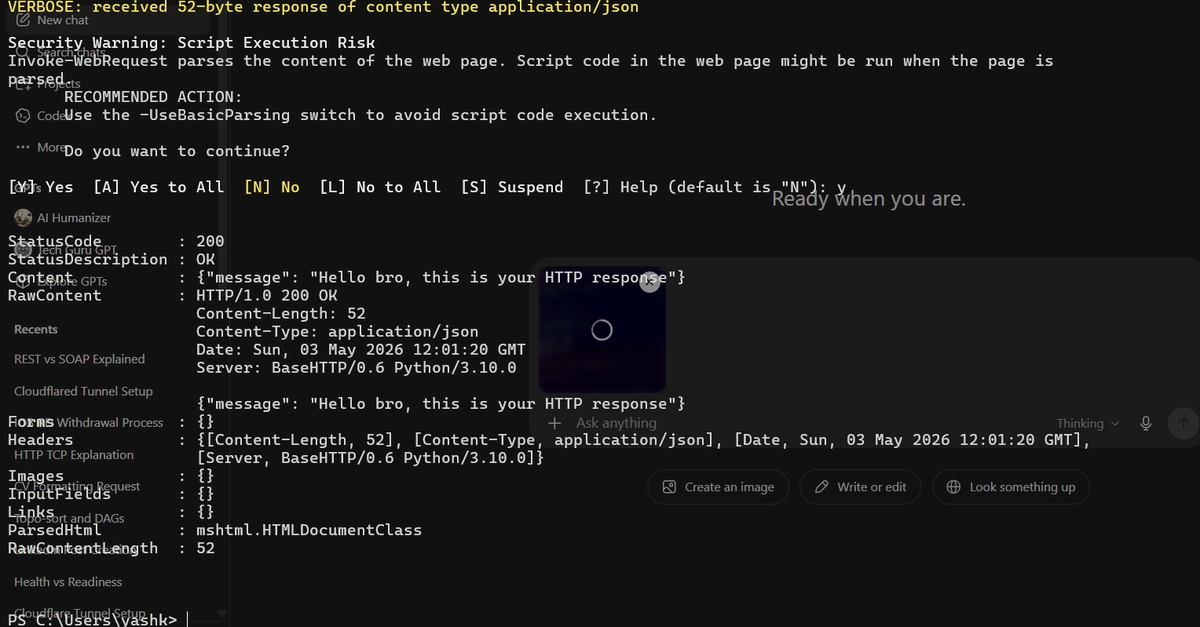

A recent project illustrates this new reality perfectly. The goal: apply neural network and math knowledge to a real-world problem. The opportunity: a Talent Day challenge from Evolve. The snag? Setting up TensorFlow on a Windows machine meant first wrestling with WSL2. This involved creating an isolated Python 3.12 environment and, crucially, ensuring TensorFlow could actually see the GPU through the NVIDIA drivers. That last bit, getting GPU acceleration to work across the Windows-Linux boundary, is where many developers get tripped up: under WSL2 the NVIDIA driver is installed on the Windows side and exposed to the Linux environment, so two systems have to agree. Following a specific YouTube video (https://www.youtube.com/watch?v=LHtNv-dq8I4&list=LL&index=2&t=1034s) became a necessity, not just an option.
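Whether TensorFlow can see the GPU is easy to check from inside the WSL2 environment. Here is a minimal sketch using `tf.config.list_physical_devices`; the wrapper `gpu_report` and its dictionary keys are illustrative names, not something from the project.

```python
def gpu_report() -> dict:
    """Report whether TensorFlow imports and which GPUs it can see."""
    try:
        import tensorflow as tf  # imported lazily because it is slow to load
    except ImportError:
        return {"tensorflow_installed": False, "version": None, "gpus": []}

    # An empty list means TensorFlow will silently fall back to the CPU.
    gpus = tf.config.list_physical_devices("GPU")
    return {
        "tensorflow_installed": True,
        "version": tf.__version__,
        "gpus": [gpu.name for gpu in gpus],
    }


if __name__ == "__main__":
    print(gpu_report())
```

An empty `gpus` list with TensorFlow installed is the classic symptom of the boundary problem: the library works, but training runs on the CPU.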

So, the ‘toolbox’ is ready, but the workbench is now a bit more… engineered. The question then becomes: who is actually benefiting from this shift? Is it the end-user developer who now has a more convoluted setup, or is it TensorFlow, or Microsoft, by further entrenching the use of WSL2?

Fashion Forward, Code Backward?

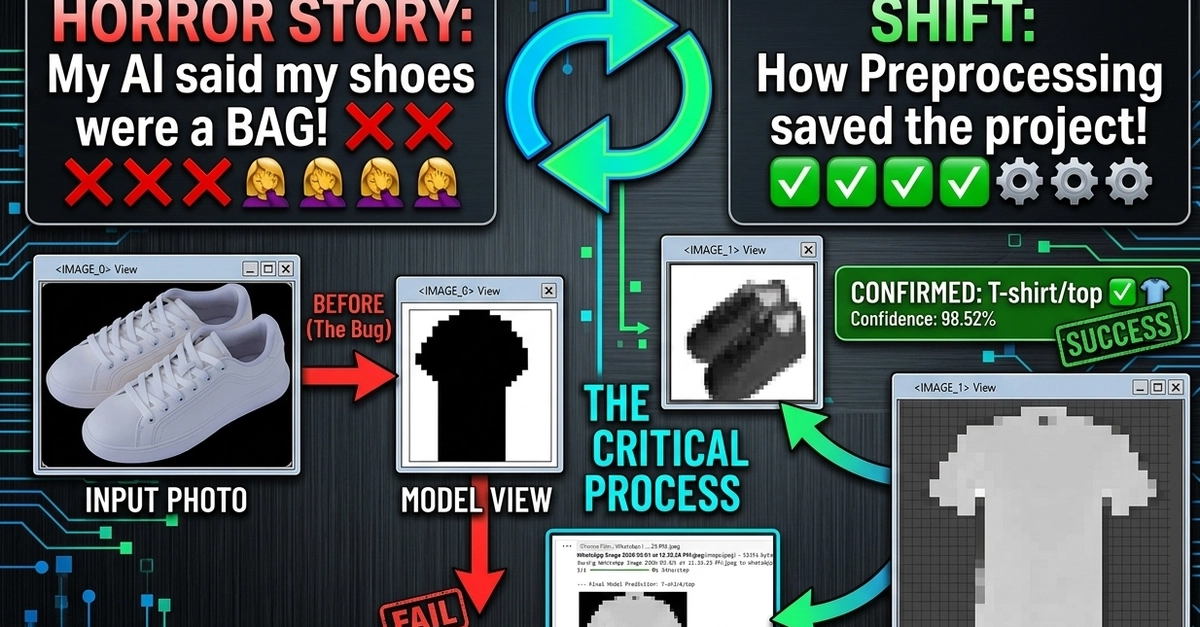

With the environment prepped, the next hurdle: the dataset. The choice? Zalando’s Fashion MNIST. It’s a familiar starting point for many, offering 70,000 images of clothing (60,000 for training and 10,000 for testing), each 28x28 pixels in grayscale, neatly categorized into 10 classes. It’s a solid, manageable dataset for learning and testing, and it’s freely available.
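For reference, Keras ships a loader for this dataset. The sketch below shows one plausible way to load and scale it; it is not necessarily how the project does it. `CLASS_NAMES` follows the label order in the dataset's documentation, and `load_fashion_mnist` is a hypothetical helper name.

```python
# Label index -> name, in the order the dataset documents them.
CLASS_NAMES = [
    "T-shirt/top", "Trouser", "Pullover", "Dress", "Coat",
    "Sandal", "Shirt", "Sneaker", "Bag", "Ankle boot",
]


def load_fashion_mnist():
    """Download (on first call) and return scaled train/test splits."""
    import tensorflow as tf  # lazy import keeps the module cheap to load

    (x_train, y_train), (x_test, y_test) = (
        tf.keras.datasets.fashion_mnist.load_data()
    )
    # Scale pixels from 0..255 to 0..1 and add a channel axis so each
    # image has shape (28, 28, 1), which Conv2D layers expect.
    x_train = x_train.astype("float32")[..., None] / 255.0
    x_test = x_test.astype("float32")[..., None] / 255.0
    return (x_train, y_train), (x_test, y_test)


if __name__ == "__main__":
    (x_train, y_train), (x_test, y_test) = load_fashion_mnist()
    print(x_train.shape, x_test.shape)
    print("first training label:", CLASS_NAMES[int(y_train[0])])
```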

The choice of Convolutional Neural Networks (CNNs) is, frankly, the no-brainer part of this equation. For image processing tasks, especially with grid-like data such as photos, CNNs are the bread and butter. They’re designed precisely for this kind of pattern recognition.
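To make that concrete, here is a small CNN of the kind commonly used on 28x28 grayscale images. It is a generic sketch, not the architecture from the project: the layer sizes are assumptions picked for illustration, and `conv_output_size`, `trace_feature_map`, and `build_cnn` are made-up helper names. The first two are plain arithmetic showing how the image shrinks as it passes through the convolution and pooling layers.

```python
def conv_output_size(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Spatial output size of a convolution or pooling layer along one axis."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_feature_map(size: int = 28) -> list:
    """Follow the image side length through the layers of build_cnn."""
    sizes = [size]
    # (kernel, stride) for: conv 3x3, pool 2x2, conv 3x3, pool 2x2
    for kernel, stride in [(3, 1), (2, 2), (3, 1), (2, 2)]:
        size = conv_output_size(size, kernel, stride)
        sizes.append(size)
    return sizes  # [28, 26, 13, 11, 5]


def build_cnn(num_classes: int = 10):
    """Build an illustrative two-block CNN for 28x28x1 inputs."""
    import tensorflow as tf  # lazy import keeps the helpers above dependency-free

    return tf.keras.Sequential([
        tf.keras.Input(shape=(28, 28, 1)),
        tf.keras.layers.Conv2D(32, 3, activation="relu"),
        tf.keras.layers.MaxPooling2D(2),
        tf.keras.layers.Conv2D(64, 3, activation="relu"),
        tf.keras.layers.MaxPooling2D(2),
        tf.keras.layers.Flatten(),
        tf.keras.layers.Dense(128, activation="relu"),
        tf.keras.layers.Dense(num_classes, activation="softmax"),
    ])


if __name__ == "__main__":
    print(trace_feature_map())
```

With integer labels, a model like this would typically be compiled with `loss="sparse_categorical_crossentropy"` and `metrics=["accuracy"]` before calling `fit`.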

And the results? Pretty darn good. An accuracy rate between 93% and 95% after 30 epochs isn’t shabby at all. The confusion matrix and the full GitHub repository (https://github.com/raulrevidiego/Clasificador-Zalando/tree/main) are available for those who want to dive deep into the specifics.
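For anyone who has not met a confusion matrix before, the idea fits in a few lines of plain Python. This sketch uses invented toy labels, not the project's results; the real figures are in the repository. Rows are true classes, columns are predicted classes, and accuracy is the share of samples on the diagonal.

```python
def confusion_matrix(y_true, y_pred, num_classes):
    """Count samples by (true class, predicted class)."""
    matrix = [[0] * num_classes for _ in range(num_classes)]
    for true_label, predicted_label in zip(y_true, y_pred):
        matrix[true_label][predicted_label] += 1
    return matrix


def accuracy(matrix):
    """Fraction of samples on the diagonal, i.e. predicted correctly."""
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    total = sum(sum(row) for row in matrix)
    return correct / total if total else 0.0


if __name__ == "__main__":
    # Toy data for illustration only: three classes, six samples.
    y_true = [0, 0, 1, 1, 2, 2]
    y_pred = [0, 1, 1, 1, 2, 0]
    matrix = confusion_matrix(y_true, y_pred, num_classes=3)
    for row in matrix:
        print(row)
    print(f"accuracy: {accuracy(matrix):.2f}")
```

Off-diagonal cells are where the interesting reading is: they show which pairs of classes the model mixes up.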

The Bottom Line: Who Wins?

This project highlights a subtle but significant shift. TensorFlow, once a more straightforward choice across platforms, now nudges Windows users firmly into the Linux ecosystem via WSL2. For developers, it means an extra step, a bit more sysadmin grunt work to get going. The upside? Potentially more robust and consistent environments when things do work. But the overhead is real.

From a business perspective, it’s a smart play for Microsoft, driving adoption of WSL2, and for Google, by keeping developers engaged with TensorFlow, even if it means a slightly more circuitous route on Windows. The real question isn’t whether TensorFlow is still powerful — it is. It’s whether this forced migration, this implicit requirement of WSL2 for Windows users, makes the barrier to entry higher than it needs to be for the next wave of aspiring deep learning engineers.

It makes you wonder if the grand vision of universally accessible AI tooling is being subtly re-routed through specific platform dependencies, prioritizing one ecosystem over another. And for developers just trying to get their hands dirty with cool tech, that’s a detail worth paying attention to.