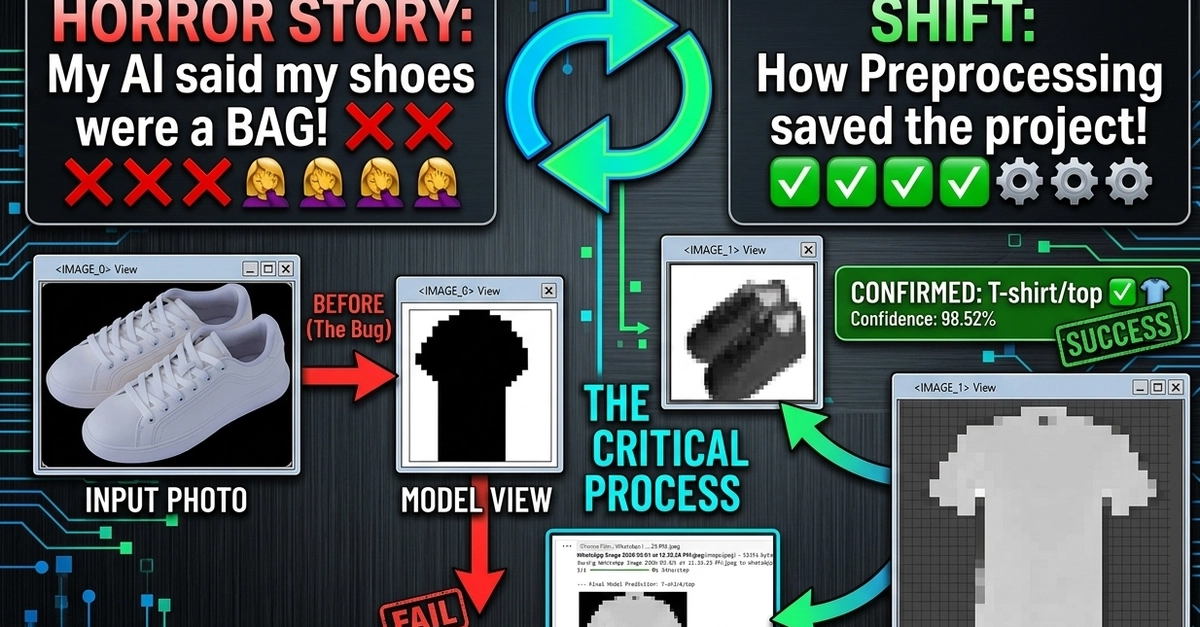

Everyone expected AI to nail the basics. You throw it a bunch of images, it spits out labels. Simple, right? Especially with something as curated as the Fashion-MNIST dataset – basically, a deconstructed MNIST but with clothes. The promise was a quick, clean demonstration of image classification, the kind of thing you’d show off in a demo. But what if the demo breaks? What if your shiny new model, built with the industry-standard TensorFlow and Keras, looks at a picture of your favorite worn-in sneakers and declares it… a bag? Yeah, that’s what happened.

It’s a story as old as silicon itself: the hype machine churns, promising the moon, and then reality, in all its pixelated glory, stomps on the PR narrative. The expectation was a smooth sail through a well-trodden dataset. The reality was a messy debugging session that, as it turns out, taught more than a pristine walkthrough ever could.

The ‘Bag’ Incident: When Pixels Aren’t Enough

So, the setup: a Sequential Neural Network, the kind that’s basically a stack of logic gates for beginners. Flatten layers, Dense layers, Dropout layers – all the standard toolkit for your first foray into machine learning. Python, NumPy, Matplotlib. All humming along. The code itself is probably fine, the kind you’d copy-paste from a hundred tutorials. And it worked, sort of. For the pre-packaged Fashion-MNIST data, sure, it could tell a shoe from a shirt with reasonable accuracy. The industry loves this stuff for inventory, trend spotting, those ‘search by image’ features that are supposed to make shopping easier. That’s where the money is, after all.

But then the real test. Upload your own photos. And bam. Sneakers? Bag. A different shoe? T-shirt. This isn’t just a minor hiccup; it’s a fundamental disconnect. It’s like asking someone who’s only ever seen black-and-white drawings to describe a sunset. They understand color exists, maybe, but they can’t see it.

Spatial Intelligence: The Missing Piece

This is where the facade cracks. The model, that simple Dense network, lacks something critical: spatial intelligence. It’s not seeing the shape of a sneaker, the curve of a sole, the laces. It’s just crunching numbers from pixel intensity. It treats every pixel as an independent data point, oblivious to the bigger picture – the actual form of the object. This is the core of the problem, and why the author had to go back to the drawing board.

It’s a classic example of a model that’s overfit to the idealized training data. Fashion-MNIST is clean, centered, perfectly scaled. Real-world photos? They’ve got shadows, angles, backgrounds, and imperfections. The model had never learned to filter that out or focus on what truly matters.

Beyond the Hype: Real-World AI Engineering

So, what’s the fix? It’s not about a magic algorithm. It’s about grunt work and understanding limitations. Refine preprocessing: making the images less noisy, enhancing features so the important bits stand out. Normalize input: getting those images to the exact size and orientation the model expects, like feeding a picky eater only their favorite foods. And the big one: acknowledging that a simple neural network isn’t enough for complex visual tasks. You need more sophisticated architectures, like Convolutional Neural Networks (CNNs), which are actually designed to recognize patterns and shapes in images.

After multiple iterations of code and preprocessing “jugaads,” I finally got the model to recognize the patterns! It taught me that AI isn’t magic—it’s about high-quality data and the right architecture.

This whole episode is a valuable reminder that for all the talk of AI automating everything, the messy, human-driven engineering part is still paramount. The “jugaad” – that Indian term for an ingenious, often unconventional, fix – is what separates a toy demo from a functional system. It’s not about finding a single perfect model, but about iterative improvement, deep understanding of data limitations, and choosing the right tools for the job. Who is actually making money here? The companies that can translate these messy, real-world data challenges into strong, scalable AI solutions. Not the ones selling simple demos.

Why Does This Matter for Developers?

Developers looking to build anything beyond a basic proof-of-concept need to internalize this. The difference between a model that works on clean datasets and one that performs in the wild is vast. It means investing time in data cleaning, understanding the architectural trade-offs (MLP vs. CNN, for instance), and embracing the trial-and-error nature of AI development. It’s about the discipline to go beyond copy-pasting and truly understand why a model fails and how to fix it. The industry isn’t just about deploying the latest large language model; it’s about the fundamental engineering of making these systems work reliably, in the messy reality of user-generated content.

🧬 Related Insights

- Read more: Grafana’s AI Sidekick Eyes Your Private Business Metrics—Secure Enough?

- Read more: PeachBot: Edge AI That Actually Survives the Real World

Frequently Asked Questions

**What is the Fashion-MNIST dataset?

Fashion-MNIST is a dataset of Zalando’s article images, used for training image classification models. It contains 70,000 grayscale images of 10 fashion categories (like T-shirts, trousers, sneakers, bags, etc.), with 60,000 for training and 10,000 for testing.

**What’s the difference between an MLP and a CNN for image classification?

MLPs (Multi-Layer Perceptrons), or Dense networks, treat input features independently. CNNs (Convolutional Neural Networks) use convolutional layers to detect spatial hierarchies of features, making them much better at understanding shapes, textures, and patterns in images, thus more suitable for tasks like image recognition.

**Is this AI problem solved for real-world fashion?

While basic image classification on clean datasets is well-established, real-world fashion AI still faces challenges. Recognizing items accurately across diverse lighting, angles, brands, and styles, especially with user-uploaded photos, requires highly sophisticated models and extensive, well-curated datasets.