When was the last time a database started a conversation with you? Probably never. And yet, at Google Cloud Next ’26, something quietly revolutionary happened, something that might actually make our AI agents more useful, not just louder. While everyone was mesmerized by the agent-powered snowboarder (and who wouldn’t be?), Google slipped a massive piece of infrastructure plumbing into general availability: managed MCP servers that let your production databases talk directly to AI agents, no complex bridges required.

This isn’t just about making a chatbot more knowledgeable; it’s about putting sophisticated AI application development within reach of far more teams. Imagine an AI agent that doesn’t just know about your inventory but can check it, update it, and predict future stock needs, all in real time, without a legion of engineers wrestling with proxy servers and authentication headaches at 3 AM.

The Pain of Data Integration

Building AI agents that can interact with real-world data (not just dummy JSON files) has been a Herculean task. You’d need to set up and manage your own MCP (Model Context Protocol) server, meticulously handle authentication (API keys? OAuth? IAM? Good luck!), and then pray your agent doesn’t accidentally overload your database with a million concurrent connections. This messy, often-overlooked infrastructure layer is what kept sophisticated AI applications in the realm of theory rather than in production. Google Cloud’s announcement tackles it head-on.

What Exactly Dropped?

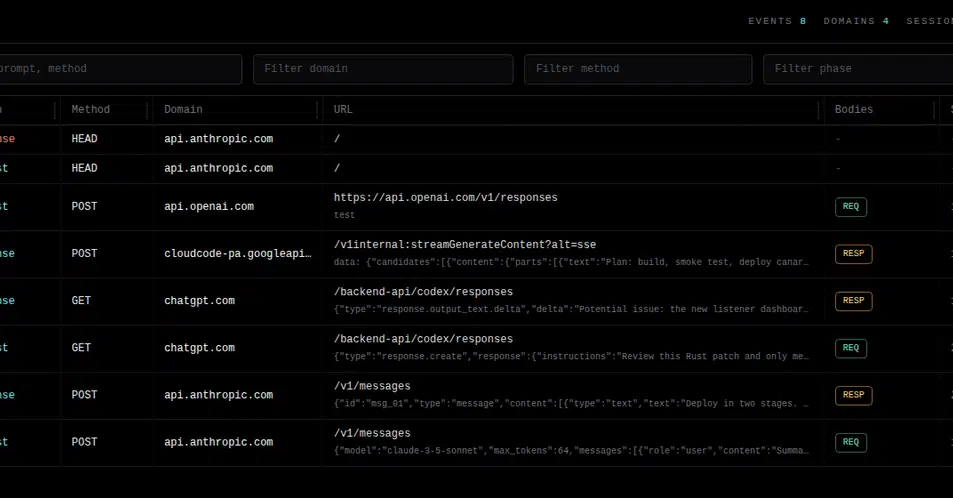

At Next ’26, Google Cloud revealed that managed, remote MCP servers are now generally available for a strong lineup of its database services: AlloyDB (PostgreSQL-compatible), Cloud SQL, Spanner, Firestore, and Bigtable. Even better, they’re in preview for Memorystore, Database Migration Service, Datastream, and Database Center, hinting at even broader integration. And for those of us wrestling with code, there’s a new Developer Knowledge MCP server that hooks your IDE directly into Google’s documentation. Your coding agent can now pull live, relevant context instead of guessing, which cuts down on the maddening hallucinations that plague less-informed AI.

The setup process is, frankly, almost anticlimactic in its simplicity. A single gcloud command enables the Spanner MCP endpoint, and bam! Your agent, whether it’s Gemini CLI, Claude, or ChatGPT, can now converse with your Spanner database in natural language. No server deployment, no auth plumbing to debug. It’s as if the database suddenly learned to speak.

```shell
gcloud beta services mcp enable spanner.googleapis.com --project=${PROJECT_ID}
```

And then, in your agent configuration:

```json
{
  "mcpServers": {
    "spanner": {
      "url": "https://spanner.googleapis.com/mcp",
      "authType": "oauth"
    }
  }
}
```
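Under the hood, an MCP client talks to a server like this with JSON-RPC 2.0 messages: `tools/list` to discover what the server offers and `tools/call` to invoke a tool. The minimal sketch below only builds those request bodies; a real client first performs the MCP `initialize` handshake and sends messages over HTTP with an OAuth token, which is omitted here. The tool name `execute_sql` and its `sql` argument are hypothetical placeholders, not the documented names exposed by the Spanner MCP server.

```python
import json
from itertools import count
from typing import Optional

# Every JSON-RPC request carries an id so responses can be matched to requests.
_next_id = count(1)


def mcp_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object of the kind MCP clients send."""
    message = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Step 1: ask the server which tools it exposes.
list_tools = mcp_request("tools/list")

# Step 2: call one of them. "execute_sql" and "sql" are HYPOTHETICAL;
# use the tool names and input schema that tools/list actually returns.
call_tool = mcp_request(
    "tools/call",
    {"name": "execute_sql", "arguments": {"sql": "SELECT COUNT(*) FROM Accounts"}},
)

print(json.dumps(list_tools))
print(json.dumps(call_tool, indent=2))
```

The agent never needs to know about this layer; the point is that "talking to the database" reduces to a small, well-defined request format that any MCP-capable client can produce.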

The Security Model: Surprisingly Solid

My immediate reaction to hearing “connect your AI agent to your production database” was, predictably, a slight tremor of dread. This is the stuff of late-night incident calls. But Google’s approach here is remarkably secure. Authentication is entirely managed via IAM. This means no hardcoded connection strings, no shared API keys floating around like digital confetti. Agents are granted access only to the specific tables or views an IAM policy permits. Every query is logged, and audit trails are automatic, fitting neatly into Google Cloud’s existing observability stack. You can spin up a dedicated service account for an agent, grant it precisely the read-only access it needs, and yank that access away in an instant. This is the kind of security posture that finally makes deploying these agents into production feel less like a gamble and more like a calculated strategy.
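Granting and revoking that access amounts to editing an IAM policy's bindings, each of which pairs one role with a list of members. The sketch below manipulates a local dict shaped like an IAM policy to show the grant-then-revoke lifecycle for a dedicated agent service account; in practice you would apply the change through gcloud or the IAM API. The service-account email is a hypothetical placeholder, and `roles/spanner.databaseReader` is a database-wide read role, so table-level scoping is not modeled here.

```python
import copy

# HYPOTHETICAL service account dedicated to one agent.
AGENT = "serviceAccount:fraud-agent@my-project.iam.gserviceaccount.com"
READ_ONLY_ROLE = "roles/spanner.databaseReader"


def grant(policy: dict, role: str, member: str) -> dict:
    """Return a copy of the policy with `member` added to `role`."""
    new = copy.deepcopy(policy)
    for binding in new.setdefault("bindings", []):
        if binding["role"] == role:
            if member not in binding["members"]:
                binding["members"].append(member)
            return new
    new["bindings"].append({"role": role, "members": [member]})
    return new


def revoke(policy: dict, member: str) -> dict:
    """Return a copy of the policy with `member` removed from every role."""
    new = copy.deepcopy(policy)
    kept = []
    for binding in new.get("bindings", []):
        binding["members"] = [m for m in binding["members"] if m != member]
        if binding["members"]:  # drop bindings left with no members
            kept.append(binding)
    new["bindings"] = kept
    return new


policy = grant({"bindings": []}, READ_ONLY_ROLE, AGENT)  # least privilege: read only
policy = revoke(policy, AGENT)  # yank access in one step
print(policy)
```

Keeping each agent on its own service account is what makes the revoke step surgical: removing one member cannot affect any other workload.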

Spanner’s Multi-Model Magic

The integration with Spanner is particularly fascinating. Spanner isn’t just a relational database anymore; it’s a multi-model powerhouse supporting graph, vector search, and full-text search alongside its relational capabilities. The MCP integration unlocks all of these through natural language. Imagine asking your agent to: “Find all accounts that received transfers from account 12345 within the last 48 hours, and check if any of them share a phone number with a flagged account.” This isn’t just a simple SQL query; it’s a complex multi-hop graph traversal fused with a relational join. With the Spanner MCP server, your agent can generate and execute this complex query automatically. Google even offers a codelab demonstrating precisely this fraud detection use case, showcasing the natural-language-to-graph-query pipeline in action.
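To make the shape of that request concrete, here is a plain-Python sketch of the logic the generated query has to express: one graph hop over recent transfers, then a join on phone numbers against flagged accounts. This is not Spanner Graph syntax and not what the MCP server emits; the `Transfer` type, account IDs, phone numbers, and timestamps are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Transfer:
    src: int
    dst: int
    at: datetime


def suspicious_recipients(transfers, phones, flagged, source, now,
                          window=timedelta(hours=48)):
    """Accounts that received money from `source` within `window` and share
    a phone number with some *other* flagged account."""
    # Graph hop: recipients of recent transfers out of the source account.
    recipients = {t.dst for t in transfers
                  if t.src == source and timedelta(0) <= now - t.at <= window}
    hits = []
    for acct in sorted(recipients):
        phone = phones.get(acct)
        if phone is None:
            continue
        # Relational join: does any flagged account share this phone number?
        if any(phones.get(f) == phone for f in flagged if f != acct):
            hits.append(acct)
    return hits


# HYPOTHETICAL sample data.
now = datetime(2026, 1, 1, 12, 0)
transfers = [
    Transfer(12345, 111, now - timedelta(hours=5)),
    Transfer(12345, 222, now - timedelta(hours=30)),
    Transfer(12345, 333, now - timedelta(hours=72)),  # outside the 48h window
    Transfer(999, 444, now - timedelta(hours=1)),     # different sender
]
phones = {111: "555-0100", 222: "555-0199", 333: "555-0100",
          444: "555-0100", 777: "555-0100"}
flagged = {777}

print(suspicious_recipients(transfers, phones, flagged, 12345, now))
```

Account 333 shares the flagged phone number but is excluded because its transfer falls outside the time window, which is exactly the kind of combined condition that is tedious to hand-write as SQL and that the agent is expected to generate.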

The Open Source Counterpart: MCP Toolbox

Complementing the managed services, Google has also released MCP Toolbox for Databases v1.0, the stable general-availability release of its open-source MCP server. It supports over 40 databases, not just Google’s own offerings but also Neo4j, PostgreSQL, MySQL, and SQLite, with contributions from numerous vendors. This dual approach (managed services for GCP-native teams, the open-source toolbox for hybrid or multi-cloud environments) makes the offering genuinely useful across a broader spectrum of developers.
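One pattern a database MCP server like Toolbox supports is exposing a curated set of named tools, each wrapping a parameterized SQL statement, instead of handing the agent unrestricted SQL. Below is a minimal standard-library sketch of that pattern against SQLite; `SqlTool`, the `inventory` table, and the `low-stock` tool are hypothetical, and this is not Toolbox's actual configuration format or API.

```python
from __future__ import annotations

import sqlite3
from dataclasses import dataclass


@dataclass(frozen=True)
class SqlTool:
    """A named, described, parameterized SQL statement an agent can call."""
    name: str
    description: str         # what the agent reads when choosing a tool
    statement: str           # uses ? placeholders, never string formatting
    params: tuple[str, ...]  # ordered parameter names the agent must supply

    def run(self, conn: sqlite3.Connection, **kwargs):
        missing = [p for p in self.params if p not in kwargs]
        if missing:
            raise ValueError(f"{self.name}: missing parameters {missing}")
        args = tuple(kwargs[p] for p in self.params)
        return conn.execute(self.statement, args).fetchall()


# HYPOTHETICAL in-memory database standing in for a real source.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE inventory (sku TEXT PRIMARY KEY, qty INTEGER)")
conn.executemany("INSERT INTO inventory VALUES (?, ?)",
                 [("A-1", 3), ("B-2", 0), ("C-3", 12)])

low_stock = SqlTool(
    name="low-stock",
    description="List SKUs whose quantity is below a threshold.",
    statement="SELECT sku, qty FROM inventory WHERE qty < ? ORDER BY sku",
    params=("threshold",),
)
TOOLS = {low_stock.name: low_stock}  # the registry an MCP server would advertise

print(TOOLS["low-stock"].run(conn, threshold=5))
```

Binding arguments through placeholders keeps agent-supplied values out of the SQL text, and the fixed statement bounds what the agent can do regardless of how it phrases its request.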

My Honest Take

The hype machine around AI agents often focuses on the flashy demos. But the real revolution isn’t in agents that can talk; it’s in agents that can do. Google’s move to simplify the database connection is a massive step towards that future. It abstracts away immense complexity, enabling developers to focus on the intelligence layer rather than the plumbing. This isn’t just a feature; it’s a foundational platform shift, akin to the early days of cloud computing or containerization. It signals a future where your data isn’t just stored; it’s an active participant in your AI applications. This, I believe, is where the true, quiet revolution lies.

Why This Matters for Developers

This is the kind of plumbing that has historically consumed developer time and budget. By abstracting away the infrastructure, authentication, and scaling concerns for connecting AI agents to databases, Google Cloud is essentially handing developers a powerful, ready-to-use tool. It lowers the barrier to entry for building sophisticated AI-powered applications. For teams already invested in Google Cloud databases, the integration is virtually frictionless. For others, the open-source MCP Toolbox offers a path to integrate with their existing or multi-cloud infrastructure. The ability for an AI agent to directly query and act upon production data in a secure, managed way is a genuine leap forward in making AI practical for everyday development tasks.


Frequently Asked Questions

Will this make my job as a database administrator obsolete? Not at all. This technology augments, rather than replaces, the DBA role. It allows for more sophisticated automation and integration but still requires careful management of data, security, and performance. The focus may shift towards overseeing AI agent interactions and ensuring data integrity at a higher level.

Is this feature only for Google’s proprietary AI models? No. MCP (Model Context Protocol) is an open standard, so any AI agent or client that supports MCP can use these managed servers to connect to your databases, including popular clients such as OpenAI’s ChatGPT and Anthropic’s Claude alongside Google’s Gemini.

What kind of data can my AI agent access? Your AI agent can access the data within the specific databases for which you’ve enabled MCP integration. Access is controlled by IAM policies, meaning you can grant granular permissions, such as read-only access to particular tables or views, ensuring that your agent only accesses the data it needs and is authorized to see.