Reports suggest AI code generation can easily hit thousands of lines per engineer per day. Thousands. Per day. If your team’s embracing the AI code revolution, congratulations. You’ve probably moved past the initial setup and seen some early gains. But now, the real fun begins. The ‘Day 2’ problems. The ones that emerge when the shiny new AI tools aren’t just novelties anymore, but the engine driving your actual output.

This isn’t about getting people to use the AI. That’s Day 1. This is about what happens when adoption scales and the code starts… well, moving. And inevitably, breaking in places you didn’t see coming.

The Code Review Conundrum

Code review. The bane of many engineers’ existence. And AI? It’s just made it worse. Faster code generation means more commits, more PRs, more lines of code piling up in review queues. What was already a chore is now a tidal wave.

On one engineering team, members had started distrusting AI because of the quality of code coming through in PRs. Not because AI can’t write decent code, but because engineers were submitting AI output without reviewing it themselves first.

This is the crux of it. Engineers, blinded by the speed, are shoving AI-generated code out the door without a second glance. The PR becomes the first time anyone scrutinizes the output. Senior engineers are left with an impossible choice: comb through 5,000 lines of AI-generated code with the same rigor as bespoke human work, or skim and feel irresponsible. Neither option is good.

Automating the Obvious (and Hoping for the Best)

So, what’s the fix? Look at your CI/CD. Automate what you can. Tools like CodeRabbit, Greptile, and Cursor’s Bugbot can catch surface-level stuff: style, obvious bugs, missing tests. They don’t replace humans. They just reduce the sheer volume of garbage humans have to sift through.
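Not every check needs a vendor tool. As a rough sketch of the “missing tests” category, a few lines of Python in CI can flag a PR that touches source files without touching any tests. The conventions below (a `tests/` directory, `test_*.py` or `*_test.py` names, Python-only sources) are assumptions about your repo. The changed paths would come from something like `git diff --name-only main...HEAD`, where `main` is an assumed base branch.

```python
from pathlib import PurePosixPath


def is_test_file(path: str) -> bool:
    """Assumed convention: files under tests/, or named test_*.py / *_test.py."""
    p = PurePosixPath(path)
    return "tests" in p.parts or p.name.startswith("test_") or p.stem.endswith("_test")


def is_source_file(path: str) -> bool:
    """Assumed convention: Python sources only; extend the suffix check as needed."""
    return PurePosixPath(path).suffix == ".py" and not is_test_file(path)


def missing_tests(changed_paths: list[str]) -> bool:
    """Return True when source files changed but no test file did."""
    touched_source = any(is_source_file(p) for p in changed_paths)
    touched_tests = any(is_test_file(p) for p in changed_paths)
    return touched_source and not touched_tests
```

It’s a blunt heuristic. A docs-only change passes, and a refactor that genuinely needs no new tests will trip it and need a human override. That’s fine: the cheap check handles the obvious case so reviewers don’t have to.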

But be warned. AI code review tools are rife with false positives. Coaching junior engineers to spot these phantom issues is key. Teach them why a flagged issue isn’t actually a problem, or why it’s an acceptable risk. Otherwise, you’re just swapping one form of noise for another.

Here’s a thought that feels deceptively simple: make engineers review their own PRs before submitting. A pre-review review. The quality of code, AI or not, reflects on the author. If your team doesn’t have this as a norm, now’s the time to bake it in.
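One lightweight way to make the self-review norm explicit is a checkbox in your PR template plus a CI check that fails when it isn’t ticked. Treat this as a sketch, not a guarantee: a checkbox can’t prove anyone read the diff, it only makes the expectation impossible to miss. The checklist wording below is a hypothetical example.

```python
import re

# Hypothetical checklist line your PR template would contain.
SELF_REVIEW_ITEM = "I reviewed every line of this diff myself"

# Matches a ticked Markdown task-list item, e.g. "- [x] I reviewed ..."
_TICKED = re.compile(r"^\s*[-*]\s+\[[xX]\]\s+(.*)$", re.MULTILINE)


def self_review_confirmed(pr_body: str) -> bool:
    """Return True if the PR description ticks the self-review checkbox."""
    return any(
        m.group(1).strip().startswith(SELF_REVIEW_ITEM)
        for m in _TICKED.finditer(pr_body)
    )
```

How the PR description reaches the check depends on your platform; in GitHub Actions, for instance, it’s available as `github.event.pull_request.body`.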

Upstream Bottlenecks: The Ticket Troubles

Code review is a downstream constraint. But the upstream planning phase? That’s where AI hasn’t made a dent. Ticketing, design, requirements gathering – none of that got faster.

Vague tickets are death by a thousand papercuts. Clarifying questions. Back-and-forths. Delays. Clear acceptance criteria, reproduction steps, system context – these aren’t AI problems. They’re fundamental software engineering problems that AI highlights by making the coding part seem easy.

This applies to all the “paperwork.” Status updates, handoff notes, design docs. The connective tissue. It’s not glamorous, but it’s where teams bleed time.

What About Those Tickets?

Experimentation is key. Build a skill for your AI assistant. Have it pull a ticket from Jira or Linear. Run it through quality checks: clear objective? Requirements? Business impact? Stakeholder named? For bugs, do you have reproduction steps? If the ticket fails these checks, send it back. Don’t let vague requirements fuel AI-generated code that’s destined to fail.
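The checks themselves are simple enough to sketch. The example below assumes you’ve already pulled the ticket from Jira or Linear and mapped it onto a small, hypothetical `Ticket` type; the field names are illustrative, not either tracker’s schema. It returns the list of failed checks, which is exactly what you’d send back to the ticket’s author.

```python
from dataclasses import dataclass, field


@dataclass
class Ticket:
    """Hypothetical, tracker-agnostic view of a ticket (field names are assumptions)."""
    key: str
    kind: str  # e.g. "bug" or "feature"
    objective: str = ""
    requirements: list[str] = field(default_factory=list)
    business_impact: str = ""
    stakeholder: str = ""
    repro_steps: list[str] = field(default_factory=list)


def ticket_problems(ticket: Ticket) -> list[str]:
    """Return the quality checks a ticket fails; an empty list means it passes."""
    problems = []
    if not ticket.objective.strip():
        problems.append("no clear objective")
    if not any(r.strip() for r in ticket.requirements):
        problems.append("no requirements or acceptance criteria")
    if not ticket.business_impact.strip():
        problems.append("business impact not stated")
    if not ticket.stakeholder.strip():
        problems.append("no stakeholder named")
    # Reproduction steps are only required for bugs.
    if ticket.kind.lower() == "bug" and not any(s.strip() for s in ticket.repro_steps):
        problems.append("bug has no reproduction steps")
    return problems


vague = Ticket(key="PROJ-1", kind="bug", objective="Fix login")
print(ticket_problems(vague))
# ['no requirements or acceptance criteria', 'business impact not stated',
#  'no stakeholder named', 'bug has no reproduction steps']
```

Keeping the checks as plain, deterministic code means the assistant only has to fetch and map the ticket; what counts as “good enough” stays something your team can read and argue about.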

This is where the management side gets interesting. Budget surprises. Senior engineers stuck reviewing instead of building. And “quality” suddenly meaning something different to everyone. AI doesn’t solve these problems; it amplifies them.

The Historical Parallel We’re Ignoring

This feels suspiciously like the early days of enterprise software. Remember when companies bought ERP systems thinking they’d magically fix everything? They didn’t. They just made existing bad processes faster and more visible. AI code generation is the same. It’s not a magical elixir; it’s an accelerant for whatever system you already have.

If your system is broken, AI will just make it break faster. And that, my friends, is the real ‘Day 2’ problem.

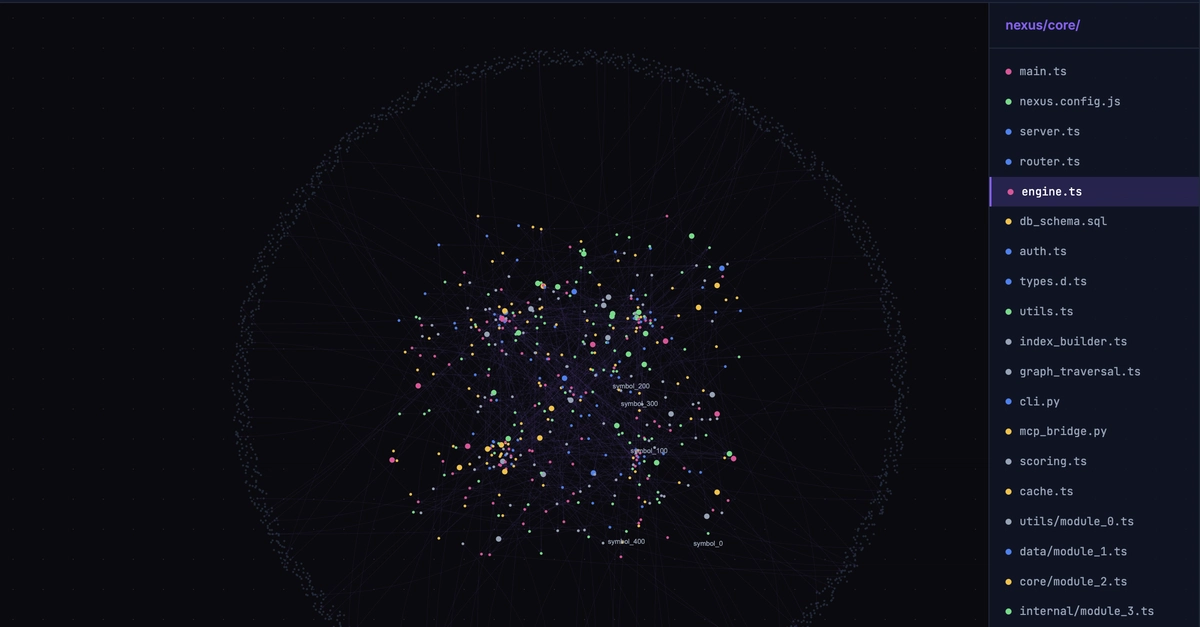

Does AI Replace Developers?

Not directly. But it certainly changes the job. Developers will need to focus more on higher-level problem-solving, architecture, and critical review of AI-generated code, rather than just writing boilerplate. The demand for those who can effectively prompt, review, and integrate AI output will increase.