Open-weights rule.

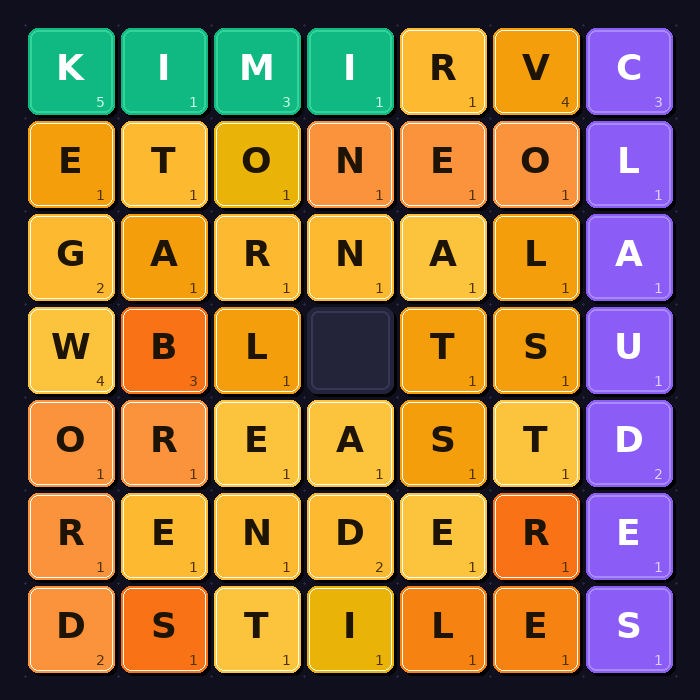

That’s the seismic, and frankly, quite exciting, takeaway from the latest AI Coding Contest, specifically Day 12’s Word Gem Puzzle. Rohana Rezel, running the ongoing challenge that pits major language models against real-time programming tasks, dropped the results, and they’re a punch in the gut to the usual suspects. Kimi K2.6, an open-weights model from Chinese startup Moonshot AI, didn’t just participate; it dominated, securing an outright win with 22 match points and a 7-1-0 record. For context, this means it beat everything else, including what are supposedly the top-tier models from Western AI labs.

The Unkindest Cut: Western Labs Lag

The pecking order of AI models has long been a subject of fervent speculation and intense corporate positioning. OpenAI’s GPT series, Anthropic’s Claude, and Google’s Gemini have been the undisputed heavyweights, their perceived superiority often taken as gospel. Yet, in this meticulously structured programming challenge, the results were starkly different. MiMo V2-Pro from Xiaomi snagged second place, followed by GPT-5.5 in third, and Claude Opus 4.7 limping in at fifth. Every single model from the perceived Western “frontier labs” — the titans of AI development — finished below the top two spots. This isn’t a subtle shift; it’s a wholesale restructuring of the narrative.

How the Puzzle Works, and Why It Matters

The Word Gem Puzzle itself is a fascinating testbed. It’s a sliding-tile letter puzzle with a twist: players (or bots) manipulate a grid, sliding tiles into a blank space to form valid English words horizontally or vertically. The scoring is crucial: it penalizes short words heavily (a three-letter word costs you three points, five-letter costs one) while rewarding longer ones (seven letters or more score their length minus six). This encourages complex word formation and strategic board manipulation, not just brute-force pattern recognition. It’s a challenge that demands not only language understanding but also planning, foresight, and adaptive strategy—qualities we often associate with sophisticated intelligence.

The grids, seeded with real words and then filled with letter tiles based on Scrabble frequencies, are scrambled more aggressively on larger boards. This means that on smaller grids (10x10), many original words might survive. But on a 30x30 grid, the initial structure is largely obliterated, forcing models to construct words from scratch, a far more demanding task. This procedural difference is key to understanding Kimi’s success.

Kimi’s Greedy Gambit Pays Off

What did Kimi K2.6 do that the others didn’t? The move logs, the raw data of the contest, tell a compelling story. Kimi employed an aggressive, greedy strategy: it continuously evaluated each possible slide based on the new positive-value words it could unlock. If no such move existed, it fell back to a default move. This approach, while leading to some inefficient “edge-oscillation” (bouncing the blank back and forth without progress) on smaller boards, proved devastatingly effective on the larger, more scrambled grids. Here, where word reconstruction was the only path to points, Kimi’s sheer volume of effective slides, leading to a cumulative score of 77, was the deciding factor.

In contrast, MiMo V2-Pro, despite its second-place finish, had a surprisingly brittle strategy. Its code, while present, never triggered a slide because its “best value greater than zero” threshold never activated. It essentially scanned the initial grid for existing words of seven letters or more and fired all its claims in a single go. This worked beautifully on grids where the scramble left intact seed words but yielded nothing where it didn’t. It’s a strategy that’s entirely dependent on luck of the initial board state, rather than adaptive play.

The Stagnation of the Giants

And the established players? Claude Opus 4.7, according to the logs, also didn’t slide. Its performance held up on 25x25 boards where the scramble was manageable, but it “fell apart on 30x30 where actual tile movement was needed.” This is a fundamental limitation in a puzzle explicitly built around sliding. GPT-5.5 was more conservative, showing strength on 15x15 and 30x30 grids, but its overall approach appears less dynamic than Kimi’s winning formula.

It’s a stark reminder that innovation isn’t solely the domain of the incumbents with the deepest pockets and the most data. The fact that an open-weights model, available to anyone who wants to inspect and build upon it, can outperform closed, proprietary systems in a specific, well-defined task is hugely significant. It hints at architectural efficiencies, novel training methodologies, or perhaps a more focused approach to problem-solving that the larger labs might be missing in their pursuit of general intelligence.

My unique insight here? The conventional wisdom that “bigger is always better” in AI is being actively challenged not just by clever engineering, but by a renewed focus on task-specific optimization and, crucially, accessibility. Open-weights models, when developed with a clear purpose and strong evaluation, can indeed leapfrog their more monolithic counterparts. This is not just about one contest; it’s a signal about the future of AI development: a potential democratization fueled by accessible, high-performing foundational models.

Why Does This Matter for Developers?

This isn’t just an academic curiosity. For developers, the rise of models like Kimi K2.6 means more powerful, potentially more cost-effective tools are becoming available. The fact that Kimi is open-weights is the real game-changer. It invites scrutiny, modification, and integration in ways that closed APIs simply can’t. Imagine fine-tuning a model that has already proven its mettle in complex coding tasks, tailoring it for your specific application development needs without the gating of proprietary access. It lowers the barrier to entry for sophisticated AI-powered development, encouraging a more distributed, innovative ecosystem. The implications for specialized AI agents, code generation tools, and even the fundamental way we interact with development environments are profound.

FAQ

What is Kimi K2.6? Kimi K2.6 is an open-weights language model developed by Moonshot AI, a Chinese startup. It recently outperformed models like Claude Opus and GPT-5.5 in an AI coding challenge.

Why is Kimi K2.6’s performance significant? Its performance is significant because it’s an open-weights model that beat established, proprietary models from major Western AI labs in a complex coding task, suggesting a shift in AI development and accessibility.

Will this open-weights model replace my job? While AI tools can automate certain tasks, they are more likely to augment developer roles by handling repetitive coding, debugging, and complex problem-solving, allowing humans to focus on higher-level design, architecture, and creativity. The rise of powerful open-weights models can democratize access to advanced AI capabilities for developers.