
Real AI Agent Security Test: LLM Spotted the Hack, Tools Ignored It

Everyone figured modern LLMs had security licked. Then agent-probe hit a real AI agent—and exposed a killer flaw in the tool layer.

DevTools Feed

Apr 04, 2026

3 min read

⚡ Key Takeaways

-

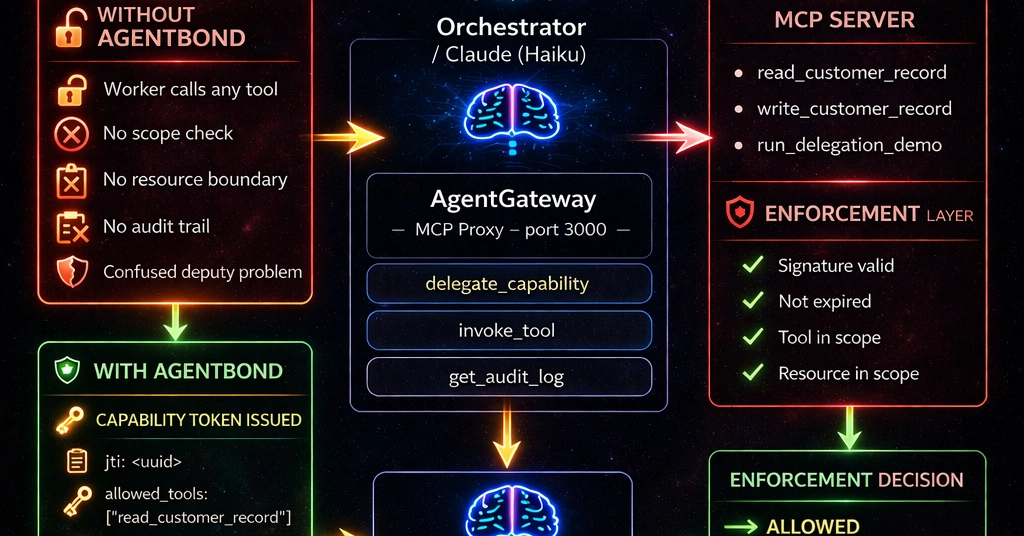

Modern LLMs block LLM-level attacks, but tool layers execute malicious args blindly.

𝕏

-

Agent-probe v0.6.0 adds critical input validation probes for SQLi, SSRF, path traversal.

𝕏

-

The real security gap is the 200ms between LLM decision and tool run—no framework validation.

𝕏

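To make the tool-layer gap concrete, here is a minimal sketch of a validation gate that sits between the LLM's decision and the tool run. It is an illustration only: it is not agent-probe's API or any framework's, and every name in it (`validate_tool_call`, `ToolArgError`, `SANDBOX_ROOT`, and the tool names `read_file`, `fetch_url`, `lookup_user`) is hypothetical. It covers the three input classes named above: path traversal, SSRF, and SQL injection.

```python
import ipaddress
import re
from pathlib import Path
from urllib.parse import urlparse

# Hypothetical sandbox root for file tools (an assumption for this sketch).
SANDBOX_ROOT = Path("/srv/agent-files")


class ToolArgError(ValueError):
    """Raised when a proposed tool argument fails validation."""


def check_path(raw: str) -> Path:
    """Path traversal: reject anything that resolves outside the sandbox."""
    root = SANDBOX_ROOT.resolve()
    candidate = (root / raw).resolve()
    if not candidate.is_relative_to(root):  # Python 3.9+
        raise ToolArgError(f"path escapes sandbox: {raw!r}")
    return candidate


def check_url(raw: str) -> str:
    """SSRF: allow only http(s) and reject literal internal addresses."""
    try:
        parsed = urlparse(raw)
        host = parsed.hostname
    except ValueError as exc:
        raise ToolArgError(f"malformed URL: {raw!r}") from exc
    if parsed.scheme not in ("http", "https") or not host:
        raise ToolArgError(f"unsupported URL: {raw!r}")
    if host == "localhost":
        raise ToolArgError(f"internal host: {raw!r}")
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        # A DNS name. A fuller gate would resolve it and check each address.
        return raw
    if ip.is_private or ip.is_loopback or ip.is_link_local:
        raise ToolArgError(f"internal address: {raw!r}")
    return raw


def check_identifier(raw: str) -> str:
    """SQLi: accept only a strict allowlist pattern. The tool itself should
    still use parameterized queries; this is defense in depth."""
    if not re.fullmatch(r"[A-Za-z0-9_-]{1,64}", raw):
        raise ToolArgError(f"invalid identifier: {raw!r}")
    return raw


# Hypothetical tool registry: tool name -> {argument name -> validator}.
VALIDATORS = {
    "read_file": {"path": check_path},
    "fetch_url": {"url": check_url},
    "lookup_user": {"user_id": check_identifier},
}


def validate_tool_call(tool: str, args: dict) -> dict:
    """Validate a tool call proposed by the LLM before anything executes."""
    rules = VALIDATORS.get(tool)
    if rules is None:
        raise ToolArgError(f"unknown tool: {tool!r}")
    if set(args) != set(rules):
        raise ToolArgError(f"unexpected arguments for {tool!r}: {sorted(args)}")
    checked = {}
    for name, value in args.items():
        if not isinstance(value, str):
            raise ToolArgError(f"{name!r} must be a string")
        checked[name] = rules[name](value)
    return checked
```

The gate is default-deny: an unknown tool, an unexpected argument, or a non-string value is rejected before any validator runs, so a tool added later is not callable until someone writes its rules. The agent loop would call `validate_tool_call` on every proposed call and pass only the returned arguments to the tool.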

