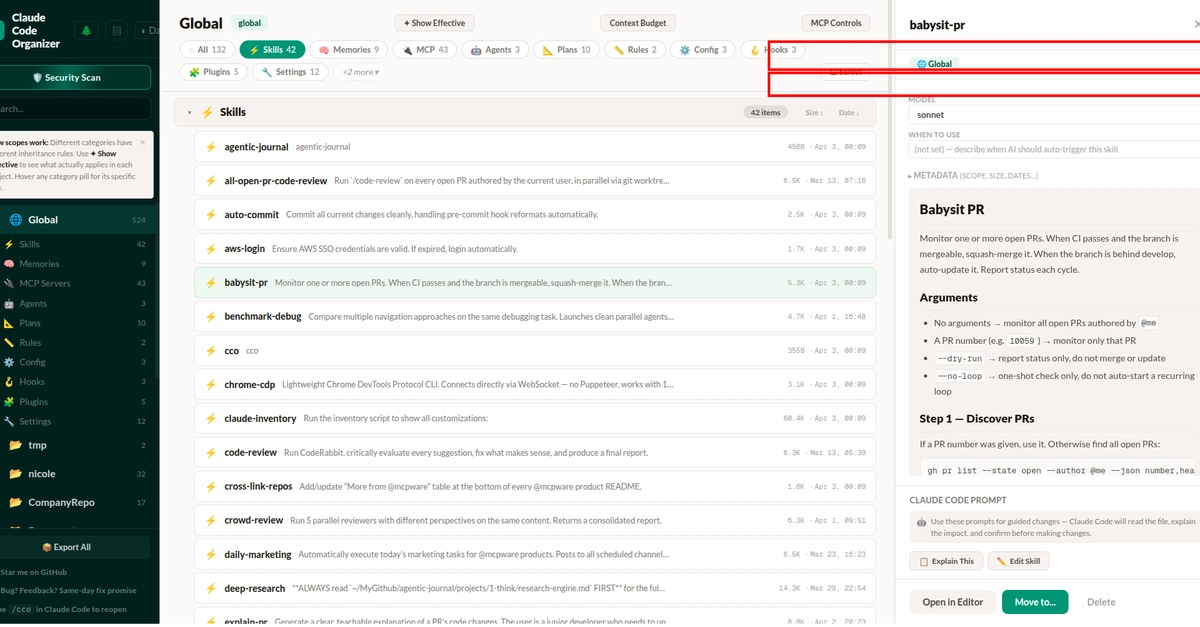

MegaTrain Puts 120B LLMs on a Single H200 GPU – Full Precision, Backed by CPU Memory

Imagine training a 120-billion-parameter LLM on a single H200 GPU. MegaTrain makes it real by sidestepping the GPU's memory limit: the model lives in CPU memory, and the GPU works on it a piece at a time.
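To see why the GPU alone cannot hold such a model, a rough estimate helps. The sketch below assumes "full precision" means 4-byte FP32 values and an Adam-style optimizer that keeps two extra values per parameter. Neither detail comes from the report, so treat the numbers as an order-of-magnitude illustration. The 141 GB figure is NVIDIA's published H200 memory capacity.

```python
# Back-of-the-envelope memory estimate.
# Assumptions (not from the report): FP32 values, Adam-style optimizer.
PARAMS = 120e9        # 120 billion parameters
BYTES_FP32 = 4        # bytes per full-precision value
H200_HBM_GB = 141     # published H200 memory capacity, in GB


def training_state_gb(params, bytes_per_value=BYTES_FP32, optimizer_slots=2):
    """Weights + gradients + optimizer slots, in decimal gigabytes."""
    copies = 1 + 1 + optimizer_slots  # weights, gradients, optimizer moments
    return params * bytes_per_value * copies / 1e9


weights_gb = PARAMS * BYTES_FP32 / 1e9
state_gb = training_state_gb(PARAMS)

print(f"weights alone:       {weights_gb:.0f} GB")
print(f"full training state: {state_gb:.0f} GB")
print(f"H200 memory:         {H200_HBM_GB} GB")
```

Under these assumptions the weights alone are several times larger than the GPU's memory, which is why the bulk of the state has to sit in host memory.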

⚡ Key Takeaways

- MegaTrain trains 120B-parameter LLMs at full precision on a single H200 GPU by offloading to CPU memory.

- Key innovations: pipelined double-buffering and stateless layer templates, which together deliver 1.84x the throughput of DeepSpeed ZeRO-3.

- The shift to a memory-centric architecture could put massive model training within reach of smaller teams.
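The double-buffering idea from the takeaways can be sketched in a few lines. This is a toy illustration, not MegaTrain's code: `fetch_layer`, `apply_layer`, and `forward_double_buffered` are hypothetical names, a background thread stands in for the host-to-GPU copy, and a scale-and-shift stands in for the real layer computation. The point is only the overlap: while layer i is being computed, layer i+1 is already being fetched, so at most two layers are resident at once.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch_layer(host_layers, i):
    """Stand-in for a host-to-GPU copy: return a fresh copy of layer i's weights."""
    return list(host_layers[i])


def apply_layer(weights, x):
    """Stand-in for the GPU computation: a toy scale-and-shift, w0 * x + w1."""
    return weights[0] * x + weights[1]


def forward_double_buffered(host_layers, x):
    """Run layers in order while prefetching the next layer in the background."""
    if not host_layers:
        return x
    with ThreadPoolExecutor(max_workers=1) as copier:
        # Start loading the first layer before any compute begins.
        pending = copier.submit(fetch_layer, host_layers, 0)
        for i in range(len(host_layers)):
            current = pending.result()  # wait until layer i is resident
            if i + 1 < len(host_layers):
                # Kick off the next copy; it overlaps with the compute below.
                pending = copier.submit(fetch_layer, host_layers, i + 1)
            x = apply_layer(current, x)
    return x


# Three toy "layers", each a (scale, shift) pair held in host memory.
layers = [(2.0, 1.0), (0.5, 0.0), (3.0, -1.0)]
result = forward_double_buffered(layers, 1.0)
print(result)
```

A real GPU implementation would replace the thread with asynchronous device copies, but the buffer rotation would follow the same pattern.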

Originally reported by Hacker News