EIE: The Local LLM Server That Runs Model Groups in Parallel Without Exploding Your GPU

What if your local AI setup could deliberate like a committee of LLMs without needing a data center? EIE does just that, and fits it all on a single RTX 4090.

⚡ Key Takeaways

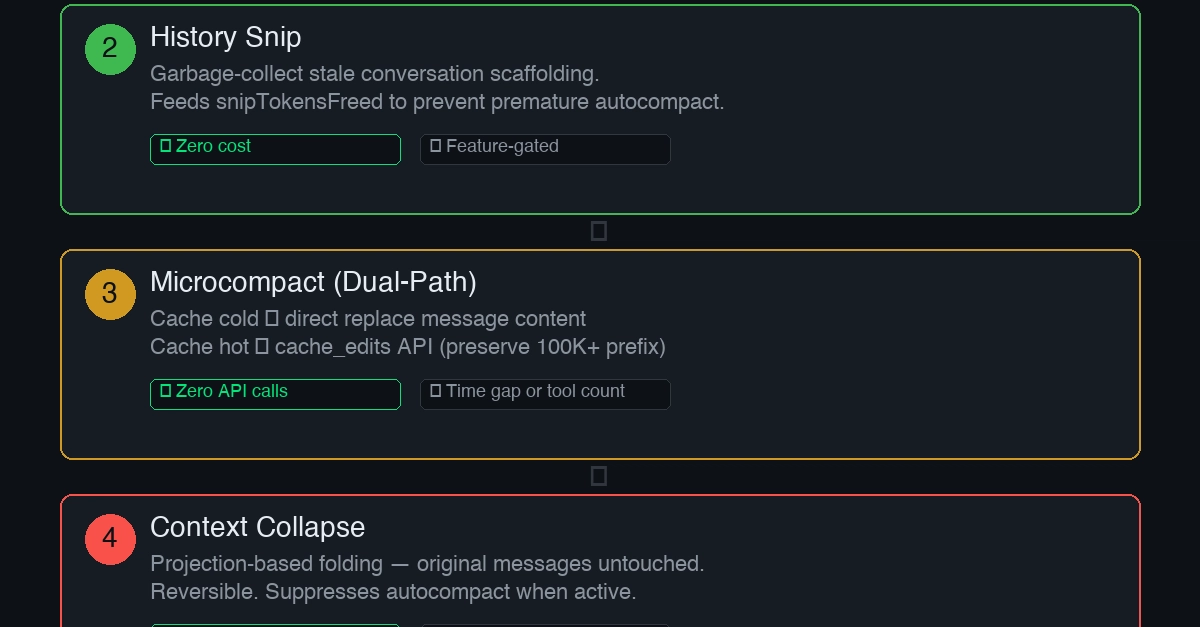

- EIE enables parallel multi-model inference with groups, fallbacks, and pluggable strategies, going beyond what Ollama or llama.cpp offer.

- TurboQuant delivers 5x KV cache compression, which lets 3 to 6 LLMs fit on a consumer GPU such as the RTX 4090.

- EIE is hardware-agnostic (NVIDIA, AMD, or CPU) and lightweight: about 1,300 lines of C++, aimed at edge and production pipelines.
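
To make the "groups, fallbacks, and pluggable strategies" idea concrete, here is a minimal Python sketch of the pattern. It is not EIE's API: `with_fallback`, `majority_vote`, `run_group`, and the stand-in models are hypothetical names invented for illustration, and the stubs return fixed strings where a real setup would call an inference backend.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

# Assumption: a "model" is modeled as a plain function from prompt to answer.
Model = Callable[[str], str]


def with_fallback(primary: Model, fallback: Model) -> Model:
    """Return a model that tries `primary` and uses `fallback` if it raises."""
    def call(prompt: str) -> str:
        try:
            return primary(prompt)
        except Exception:
            return fallback(prompt)
    return call


def majority_vote(answers: List[str]) -> str:
    """One possible strategy: pick the most common answer."""
    return Counter(answers).most_common(1)[0][0]


def run_group(models: List[Model], prompt: str,
              strategy: Callable[[List[str]], str] = majority_vote) -> str:
    """Query every model in the group concurrently, then combine the answers."""
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        answers = list(pool.map(lambda m: m(prompt), models))
    return strategy(answers)


# Hypothetical stand-in models with fixed behavior.
def model_a(prompt: str) -> str:
    return "yes"


def model_b(prompt: str) -> str:
    return "no"


def flaky_model(prompt: str) -> str:
    raise RuntimeError("out of memory")


group = [model_a, model_b, with_fallback(flaky_model, model_a)]
result = run_group(group, "Is 17 prime?")
print(result)
```

The strategy is passed in as an ordinary function, so swapping majority voting for, say, "first answer wins" means changing only the `strategy` argument; the group and fallback wiring stay the same.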



Originally reported by dev.to