AI agents are messy. Developers want them to be less messy. Shocking, I know.

But here we are, staring down the barrel of applications that are supposed to be smart assistants but instead act like toddlers who’ve discovered caffeine. The problem isn’t the AI itself, not entirely. It’s how we’re trying to build around it, or rather, failing to build around it. The current approach is, frankly, a disaster waiting to happen.

The “Vibe Coding” Trap and Verification Burden

This is where we’re at: developers are essentially winging it with conversational prompts. It’s called “vibe coding.” Sounds great for a jam session, less so for production software. The result? A debugging nightmare. You can’t just trust the output; you have to verify everything. This isn’t efficiency; it’s a colossal waste of time. As one developer puts it:

In my personal experience, sometimes you have to carefully craft the prompt to guide AI generate a concise output and use formulas to control the quality of AI outputs.

Yeah, no kidding. It’s like trying to build a skyscraper with a rubber hammer. You need structure, not good vibes.
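One way to shrink the verification burden is to stop eyeballing raw model text and check it in code instead. Here is a minimal sketch, assuming the model was asked to reply with a JSON object. The field names (`summary`, `confidence`) and the 280-character limit are invented for illustration, not taken from any particular framework.

```python
import json


def validate_reply(raw: str, max_summary_chars: int = 280) -> dict:
    """Parse a model reply and reject anything that breaks the contract.

    The expected fields ("summary", "confidence") are hypothetical.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc

    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")

    summary = data.get("summary")
    if not isinstance(summary, str) or not summary.strip():
        raise ValueError("missing or empty 'summary'")
    if len(summary) > max_summary_chars:
        raise ValueError("'summary' is too long")

    confidence = data.get("confidence")
    # bool is a subclass of int, so exclude it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError("'confidence' must be a number")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("'confidence' must be between 0 and 1")

    return {"summary": summary.strip(), "confidence": float(confidence)}
```

Anything that fails the check raises a `ValueError`, so a bad reply becomes an ordinary, catchable error rather than something a human has to spot.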

Execution Loops and Environmental Fragility

Then there’s the dreaded infinite loop. Agents, when interacting with the outside world—APIs, data, whatever—get stuck. They hit a snag, like a broken API call or a change in data format, and just… freeze. Or worse, they spiral. Without hard limits, they can’t recover. It’s like giving a self-driving car a learner’s permit and no traffic laws.
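Hard limits are cheap to add. The sketch below caps both the number of attempts and the wall-clock time. `run_bounded`, `StepLimitExceeded`, and the shape of the `step` callable are hypothetical names chosen for this example; a real agent would likely add backoff and distinguish retryable errors from fatal ones.

```python
import time
from typing import Callable


class StepLimitExceeded(RuntimeError):
    """Raised when the agent uses up its budget without finishing."""


def run_bounded(
    step: Callable[[int], bool],
    max_steps: int = 5,
    max_seconds: float = 30.0,
) -> int:
    """Call `step` until it reports completion or a hard limit is hit.

    `step` is a hypothetical callable that takes the attempt number and
    returns True when the task is done. Exceptions count as failed attempts.
    Returns the number of attempts used.
    """
    deadline = time.monotonic() + max_seconds
    last_error = None
    for attempt in range(1, max_steps + 1):
        if time.monotonic() > deadline:
            break
        try:
            if step(attempt):
                return attempt
        except Exception as exc:  # e.g. a broken API call
            last_error = exc
    # Chain the last failure (if any) so the cause stays visible.
    raise StepLimitExceeded(
        f"gave up after {max_steps} steps or {max_seconds}s"
    ) from last_error
```

The agent either finishes inside its budget or stops with a single, well-defined exception. No freezing, no spiraling.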

The Complexity of Probabilistic Context

Frameworks are pushing for complex RAG pipelines and vector databases to give these agents memory. Sounds fancy, right? In reality, it often introduces more problems: latency, unpredictable retrieval failures, and a whole lot of infrastructure overhead. For anything requiring precision, this probabilistic approach is a ticking time bomb. The problems it creates can be worse than the one it’s supposed to solve.

Absolute Predictability

What do developers actually want? Systems that fail predictably. Systems where you can trace exactly why an agent did what it did. Auditability. Deterministic logging. Structured logic. Instead, we’re getting a black box that sometimes does the right thing. One developer’s comment hints at the solution:

That is why you have to rely on existing packages on programming languages to build an app specifically tailored to control predictability. For example, let LLM write python code to execute, is a good quality control method.

This isn’t revolutionary; it’s basic engineering. You need control. You need to know what’s happening.
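Traceability doesn't need heavy tooling to get started. Below is a minimal sketch of an append-only audit log written as JSON lines. The `AuditLog` class and its field names are assumptions made for this example, not part of any library.

```python
import json
from dataclasses import dataclass, field


@dataclass
class AuditLog:
    """Append-only record of agent steps, serialised as JSON lines."""

    entries: list = field(default_factory=list)

    def record(self, step: str, inputs: dict, output: str) -> None:
        # The sequence number gives a stable ordering for later replay.
        self.entries.append(
            {"seq": len(self.entries), "step": step, "inputs": inputs, "output": output}
        )

    def dump(self) -> str:
        # sort_keys keeps the serialised form identical across runs.
        return "\n".join(json.dumps(e, sort_keys=True) for e in self.entries)
```

Record every model call with its inputs and output, and "why did the agent do that?" becomes a question you answer by reading a file.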

The Real Problem: It’s Still About Engineering

Look, AI agents aren’t magic. They’re tools. And tools need to be built with engineering principles. This isn’t a debate about whether AI is going to take over the world; it’s about whether we can build useful applications with it.

you still need to know what the product should be, what tradeoffs matter, what the architecture should compress around

Exactly. The technology is exciting, but the fundamentals of software design haven’t changed. You still need to understand the problem, the constraints, and the desired outcome. All the fancy LLMs in the world won’t help if the underlying architecture is a house of cards.

Ditch the Vibe, Embrace the Machine

The answer? State machines. Forget the open-ended reasoning loops that lead to chaos. Design your agent’s core as a deterministic state machine. Each state is a clear objective. The LLM’s job isn’t to figure out the entire flow; it’s to process the current state and deliver a precise output that moves the machine to the next step. This is how you get predictable, reliable agents. It’s a standard approach for a reason.

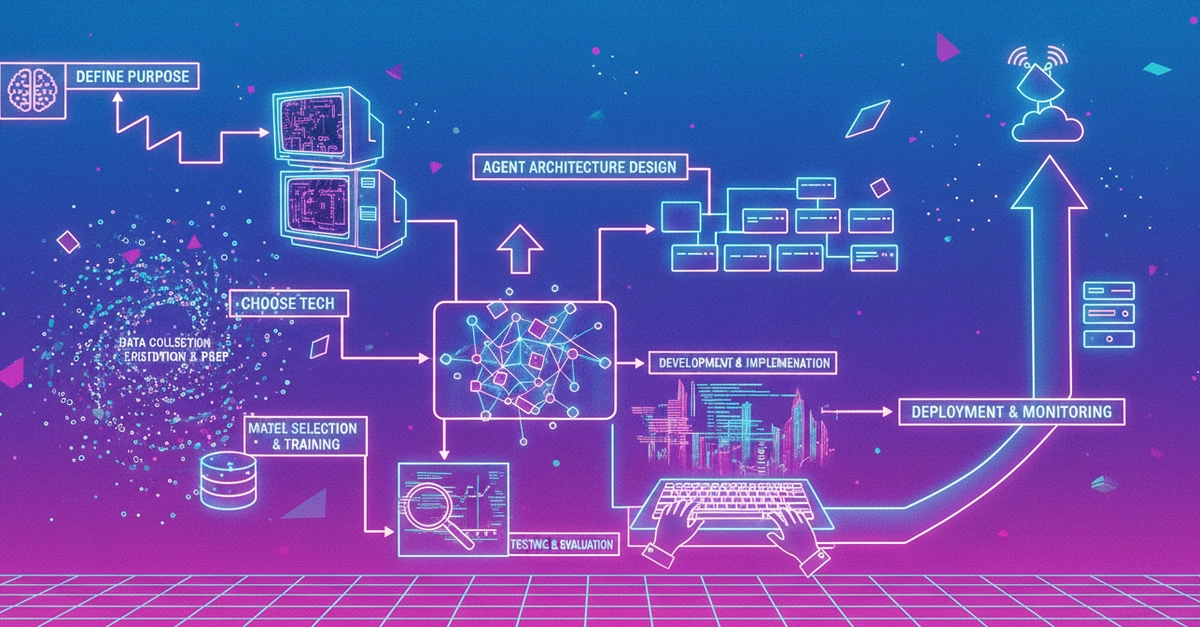
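To make the idea concrete, here is a small sketch of an agent core built as a state machine. The states, the transition labels, and the `llm` callable are all hypothetical stand-ins. The point is only that the model picks among outputs the machine already allows, and anything else is rejected.

```python
from enum import Enum, auto
from typing import Callable


class State(Enum):
    CLASSIFY = auto()
    ANSWER = auto()
    ESCALATE = auto()
    DONE = auto()


# Allowed outputs per state and the state each one leads to.
# These states and labels are hypothetical.
TRANSITIONS = {
    State.CLASSIFY: {"question": State.ANSWER, "complaint": State.ESCALATE},
    State.ANSWER: {"ok": State.DONE},
    State.ESCALATE: {"ok": State.DONE},
}


def run_agent(
    llm: Callable[[State, str], str], user_input: str, max_steps: int = 10
) -> list:
    """Drive the machine to DONE; `llm` is a stand-in for a real model call."""
    state = State.CLASSIFY
    trace = [state]
    for _ in range(max_steps):
        label = llm(state, user_input).strip().lower()
        allowed = TRANSITIONS[state]
        if label not in allowed:
            # Fail predictably instead of letting the model improvise.
            raise ValueError(f"{state.name}: unexpected output {label!r}")
        state = allowed[label]
        trace.append(state)
        if state is State.DONE:
            return trace
    raise RuntimeError("step limit reached before DONE")
```

The returned trace is the full list of states visited, which doubles as a record of the path the agent took.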

The Tech Stack for Sanity

So, what does this sanity-saving stack look like?

The Brains of the Operation: LLMs

This is where the intelligence comes from. You’ve got two main choices:

- Managed Third-Party APIs: Think OpenAI, Anthropic Claude, Google Gemini. Fast to get started, less control.

- Hosted Open-Source Models: DeepSeek, GLM models. Self-host for ultimate data control, more setup.

The Glue: Orchestration Frameworks

These tools connect your logic, your data, and the AI.

- LangChain / LangGraph: The current heavyweights for chaining prompts and building multi-agent systems.

- LlamaIndex: Great for connecting LLMs to specific datasets. Think document assistants.

- Vercel AI SDK: Handy for web apps, especially if you need real-time streaming responses.

The Memory: Databases

Forget traditional databases for semantic search. You need vector databases or relational databases with vector extensions.

- Vector Databases: Pinecone, Milvus, Qdrant. For lightning-fast RAG.

- Relational Databases with Vector Support: PostgreSQL with pgvector is a solid choice, handling both user data and AI vectors.

- Managed Vector Platforms: Supabase, Neon. Streamline things for smaller teams.
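Under the hood, what these stores do at query time is nearest-neighbour search over embeddings. Here is a toy, in-memory sketch of that idea using cosine similarity. The function names and the tiny hand-written vectors are made up for illustration; a real store would use an index and real embeddings.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query, docs, k=2):
    """Return the names of the k documents most similar to the query vector."""
    scored = sorted(
        docs.items(), key=lambda item: cosine(query, item[1]), reverse=True
    )
    return [name for name, _ in scored[:k]]
```

This brute-force version scans every document, which is fine for a few thousand vectors and is exactly what dedicated indexes exist to avoid at scale.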

The Engine Room: Backend

This is where your core business logic lives. It handles authorization, data processing, and feeds the AI.

- Python (FastAPI / Django): Still the king for AI backends.

This isn’t just about building an AI app; it’s about building a reliable AI app. The hype is deafening, but the engineering fundamentals remain. If you’re still “vibe coding” your agent’s logic, you’re already behind. Get structured.