Word Embeddings: From Brittle Counts to Semantic Vectors That Actually Work

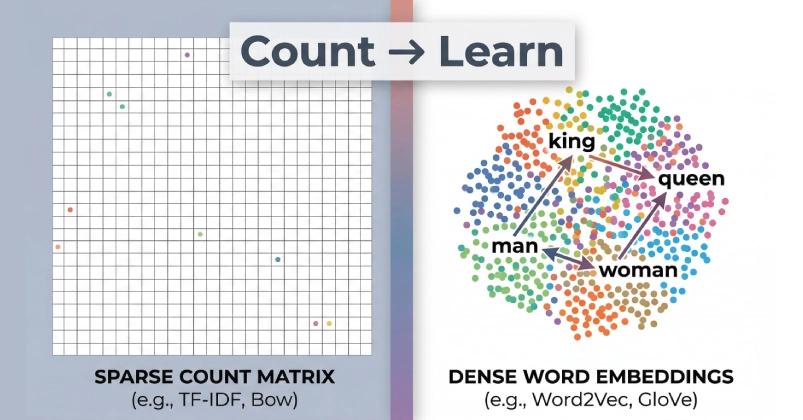

Does your next AI app stumble on synonyms? Blame sparse vectors. Dense embeddings fixed that, and today's large language models are built on them.

theAIcatchup

Apr 08, 2026

4 min read

⚡ Key Takeaways

- Sparse vectors like TF-IDF excel at exact-term search but miss synonyms and generalize poorly.

- Word2Vec learns dense embeddings by predicting context words, which enables vector arithmetic for analogies.

- Modern NLP builds on this foundation, but watch for inherited biases in production.
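To make the first takeaway concrete, here is a minimal sketch of why sparse vectors miss synonyms. It is a from-scratch TF-IDF in plain Python, not any particular library's implementation; the helper names `tfidf_vectors` and `cosine`, the three toy documents, and the smoothed IDF variant are all assumptions chosen for illustration.

```python
import math
from collections import Counter


def tfidf_vectors(docs):
    """Return (vocab, vectors): one TF-IDF vector per document (hypothetical helper)."""
    tokenized = [doc.lower().split() for doc in docs]
    vocab = sorted({tok for toks in tokenized for tok in toks})
    n_docs = len(docs)
    # Document frequency: how many documents contain each term.
    df = Counter(tok for toks in tokenized for tok in set(toks))
    # Smoothed IDF; one common variant, assumed here for illustration.
    idf = {tok: math.log((1 + n_docs) / (1 + df[tok])) + 1 for tok in vocab}
    vectors = []
    for toks in tokenized:
        tf = Counter(toks)
        # One dimension per vocabulary term; absent terms contribute 0.
        vectors.append([tf[tok] * idf[tok] for tok in vocab])
    return vocab, vectors


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


# Toy corpus (made up for this sketch): two synonyms and one overlapping phrase.
docs = ["film", "movie", "film review"]
vocab, vecs = tfidf_vectors(docs)

sim_synonym = cosine(vecs[0], vecs[1])  # "film" vs "movie": no shared dimension
sim_overlap = cosine(vecs[0], vecs[2])  # "film" vs "film review": shared term

print(f"film vs movie:       {sim_synonym:.3f}")
print(f"film vs film review: {sim_overlap:.3f}")
```

Because "film" and "movie" occupy different dimensions, their similarity is exactly zero no matter how the terms are weighted, while any literal overlap scores above zero. Dense embeddings address this by placing words that appear in similar contexts near each other in a shared vector space.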
